A robots.txt file is the text file defined by the robots exclusion protocol (also called the robots exclusion standard). This small file regulates how search engine crawlers and other web crawlers access a website. It contains rules that tell crawlers and bots whether they may crawl the whole site or only part of it.
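To make the idea of crawl rules concrete, here is a minimal sketch that uses Python's standard-library `urllib.robotparser` module to ask what a set of rules allows. The rules and the crawler names `ExampleBot` and `OtherBot` are hypothetical examples, not taken from any real site. `ExampleBot` may crawl everything, and every other crawler is kept out of `/private/`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: "ExampleBot" may crawl everything (an empty Disallow
# blocks nothing), while all other crawlers are kept out of /private/.
SAMPLE_ROBOTS_TXT = """\
User-agent: ExampleBot
Disallow:

User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text held in memory instead of fetching it from a site."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)
# "OtherBot" has no group of its own, so the "User-agent: *" group applies.
print(parser.can_fetch("OtherBot", "https://www.yoursite.com/private/report.html"))    # False
print(parser.can_fetch("OtherBot", "https://www.yoursite.com/blog/"))                  # True
print(parser.can_fetch("ExampleBot", "https://www.yoursite.com/private/report.html"))  # True
```

Keep in mind that `urllib.robotparser` applies the first matching rule in a group and does not support `*` or `$` wildcards inside paths. For more complex files, its answers can therefore differ from how a search engine such as Google reads them.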

Here's a complete guide to accessing, editing, and uploading the robots.txt file on your website.

Check whether your site has a robots.txt file

To check whether your site has a robots.txt file, open the URL below in your web browser. The robots.txt file sits in the root folder of your site (the public_html folder on cPanel hosting), so anyone can view it in a browser.

www.yoursite.com/robots.txt

If this URL shows your robots.txt file, download the file from your server.
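If you prefer to run this check from a script, the sketch below builds the robots.txt URL for a site and requests it with Python's standard library. It assumes the site is served over HTTPS when no scheme is given. The helper names `robots_txt_url` and `has_robots_txt` are illustrative, not part of any library.

```python
from urllib.error import HTTPError
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_txt_url(site: str) -> str:
    """Return the robots.txt URL at the root of the host serving `site`."""
    # Assumption: default to HTTPS when the scheme is omitted.
    if "://" not in site:
        site = "https://" + site
    parts = urlsplit(site)
    # Crawlers only look for robots.txt at the root path of the host.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def has_robots_txt(site: str, timeout: float = 10.0) -> bool:
    """Return True if the robots.txt URL answers with HTTP 200 (after redirects).

    Network failures such as DNS errors raise urllib.error.URLError instead,
    because they say nothing about whether the file exists.
    """
    try:
        with urlopen(robots_txt_url(site), timeout=timeout) as response:
            return response.status == 200
    except HTTPError:
        # 404 Not Found or another error status: no usable robots.txt file.
        return False
```

For example, `robots_txt_url("www.yoursite.com/blog/post")` gives `https://www.yoursite.com/robots.txt`, and `has_robots_txt("www.yoursite.com")` performs the actual request.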

Download the robots.txt file to your local PC

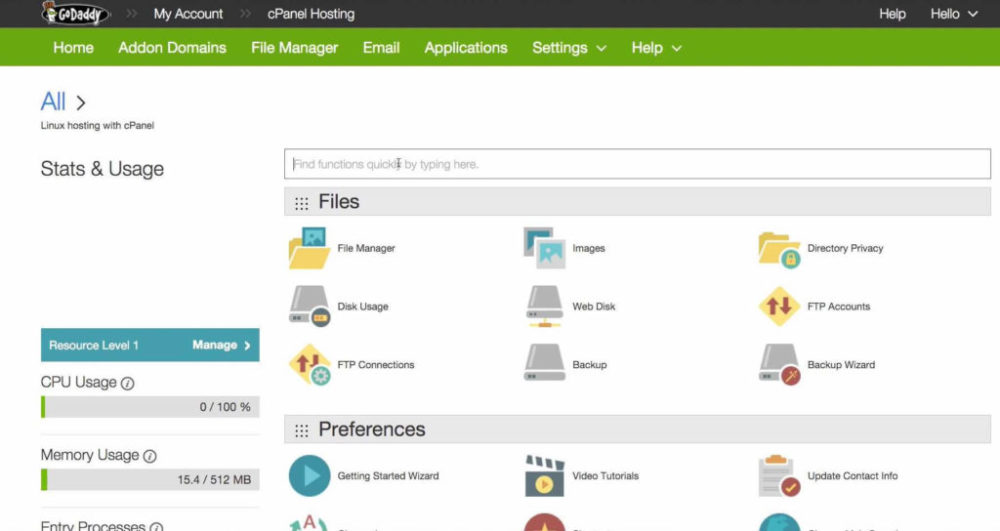

To download the robots.txt file to your local computer, follow these steps. I am using GoDaddy's shared hosting panel to illustrate the process.

Step 1: Log in to your cPanel.

Step 2: Find File Manager in your dashboard and open it.

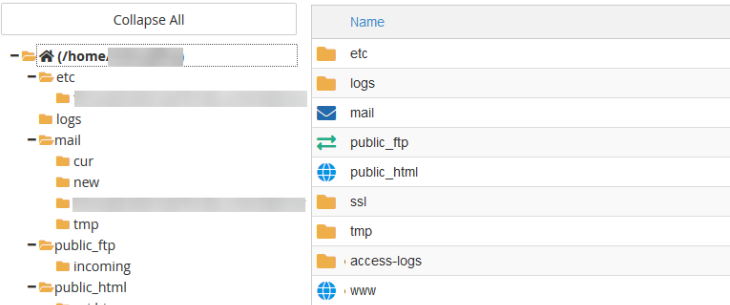

Step 3: Go to the public_html folder and find the robots.txt file.

Step 4: Select the robots.txt file and click the Download button at the top to save it to your computer. You can then edit it in Notepad or Notepad++, keeping it as a plain text file.

Step 5: Upload the edited file back to the public_html folder, replacing the old robots.txt.

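Step 6: Check and validate your robots.txt file, for example by opening www.yoursite.com/robots.txt in your browser to confirm that your changes are live.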
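Part of Step 6 can be scripted. Below is a rough lint sketch in Python that flags common mistakes in an edited robots.txt: lines without a colon, misspelled or unknown directives, Allow/Disallow rules that appear before any User-agent line, and paths that do not start with `/` (as the RFC 9309 syntax expects). The set of accepted directives and the helper name `lint_robots_txt` are assumptions for illustration. This is not a full validator, and some crawlers may accept directives it flags.

```python
# Assumption: a common set of directives; some crawlers accept others.
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def lint_robots_txt(text: str) -> list[str]:
    """Return human-readable warnings for suspicious lines (a rough check only)."""
    warnings = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding spaces
        if not line:
            continue
        if ":" not in line:
            warnings.append(f"line {number}: missing ':' in {raw!r}")
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field not in KNOWN_FIELDS:
            warnings.append(f"line {number}: unknown directive {field!r}")
        elif field == "user-agent":
            seen_user_agent = True
        elif field in ("allow", "disallow") and not seen_user_agent:
            warnings.append(f"line {number}: {field} appears before any User-agent")
        elif field in ("allow", "disallow") and value and not value.startswith("/"):
            warnings.append(f"line {number}: path should start with '/'")
    return warnings


# Hypothetical file with three deliberate mistakes.
sample = """\
Disallow: /tmp/
User-agent: *
Disalow: /private/
Disallow: admin/
Sitemap: https://www.yoursite.com/sitemap.xml
"""
for warning in lint_robots_txt(sample):
    print(warning)
```

To lint your own edited file instead of the sample, pass in its contents, for example `lint_robots_txt(open("robots.txt", encoding="utf-8").read())`.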

Read these two articles to learn more about robots.txt:

Robots.txt File to Disallow the Whole Website

Robots.txt to Disallow Subdomains